Deploying A WordPress SaaS Application on Amazon ECS

Amazon Elastic Container Service (ECS) makes it easy to run container-based applications on the AWS cloud. Store Locator Plus® is a SaaS service built on WordPress multisite that provides a directory and location mapping for businesses. The SaaS platform has been running on EC2 instances backed by cloud-based Elastic Block Storage and an Elastic Load Balancer. Up to now, these services have been managed via a mostly manual process. While the application codebase is updated with CI/CD processes, the server images and resulting cluster nodes are updated through the AWS web interfaces.

For 2024, Store Locator Plus® is working toward a modern solution that leverages containers and Amazon ECS to manage the underlying services via an automated process.

Challenges With Hosting Multi-Node WordPress Applications

One of the main challenges with hosting a single WordPress application across multiple compute nodes is the design of WordPress itself. The application was created almost two decades ago when clusters and distributed compute environments were rare. That means that WordPress is mostly designed to run small-scale sites, not large multi-user SaaS applications. While SaaS applications are possible, the core of WordPress is much happier running on a single server where all the code, content, and memory cache are in one place. Dividing it up is possible, but it takes planning.

We’ve already done the homework with WordPress multisite running on a horizontally scalable server cluster. Our environment separates persistent objects, like images someone uploads, from the code. It also ensures the database is running on an independent endpoint which links to a highly scalable RDS instance in multiple zones for both performance and fault tolerance. The only thing that is hosted and running “in memory and on the CPU” is the code. Our service automatically adds or removes nodes on the “compute cluster”, which is running the latest Store Locator Plus® code.

Any persistent objects are pushed out to a shared elastic block storage (EBS) backed by S3, ensuring that if a user uploads an image while connected to a server in Virginia, they see the same image in their account when they connect to the app running on a server in California. The same goes for the database: a location added while connected to server “A” does not suddenly disappear if your next web session is attached to server “B”.

Thankfully, AWS cloud services handle much of this for us once we have built the EC2 images and configured the WordPress environment to work with the proper AWS service. The AWS Elastic Load Balancer monitors and manages the nodes and ensures things stay running even when peak load nears the current capacity of the cluster.

It all works great — but is done with custom configurations that are managed “by hand” on AWS Consoles.

Thankfully we know what the challenges are with running WordPress on multiple nodes in the cloud where every node has to look the same to the end user. We are not running hundreds of separate websites here — it is ONE BIG SaaS application where every node needs to be running the same thing. All accounts on all nodes, always.

Moving A Container Development Environment To Amazon ECS

The deployed staging and production applications work well in this environment. Our development team, however, works locally on their laptops. To provide a high-performance environment where concepts can be tried and deployed quickly, we have built on a Docker-based development platform. This allows us to support the current running environment using the exact same versions of PHP and WordPress that drive the platform, allowing for routine updates as needed. It also allows us to test things like a new version of PHP and WordPress with our custom SaaS software. Neither WordPress nor PHP is anywhere close to being “fully backward compatible”, especially when it comes to major versions.

However, in the local Docker environments we often connect to a developer-specific copy of the RDS database but do NOT use the persistent objects stored on the EBS. We do not configure these environments locally to use EBS as it can slow down the services enough to make rapid development a chore. The same can happen with the cloud-based RDS connections, leaving developers to spin up a “skeleton copy” of the database and load it with fake data.

While this is effective for development, it does not allow us to simply copy our development environment Docker containers over to ECS and deploy identical images between these services. As such, an investigation into how to keep the development and deployment environments consistent is ongoing.

Docker Compose CLI supports AWS ECS deployments. Learn more via the Docker Compose CLI documentation.

You can also use Docker Desktop (GUI) and Docker Compose to build and deploy AWS ECS instances.

Amazon ECS Notebook

What follows is my “Research Notebook” about Amazon ECS and what it will take to deploy a WordPress SaaS application on Amazon ECS.

ECS Documentation “Home Page” on AWS.

AWS Supporting Tech

Related AWS services we will likely employ: IAM, EC2 Auto Scaling, Elastic Load Balancing, Elastic Container Registry (new), and possibly CloudFormation (new – like Terraform most likely).

We will likely end up using AWS Key Management Service (KMS) to encrypt and store important things like data access passwords as well — currently managed by Docker environment variables on our local dev boxes.

We will most likely want to leverage AWS CLI locally to help configure and manage our ECS configuration and deployment.

ECS Types of Deployment

Amazon’s Elastic Container Service (ECS) can manage three types of container deployments: EC2 instances, serverless (Fargate), or custom on-premises deployments. For our use case, EC2 instances are the best option. Per the ECS documentation, “EC2 is suitable for… applications that need to access persistent storage”, like those shared images we mentioned above.

We will choose EC2 deployments.

Building An AWS Docker Image

To do this without using a self-managed AMI it looks like it will be best to start with a baseline Amazon-created Docker image as the root image for our SaaS service. To keep disruption to a minimum, it should mimic our current environment as closely as possible.

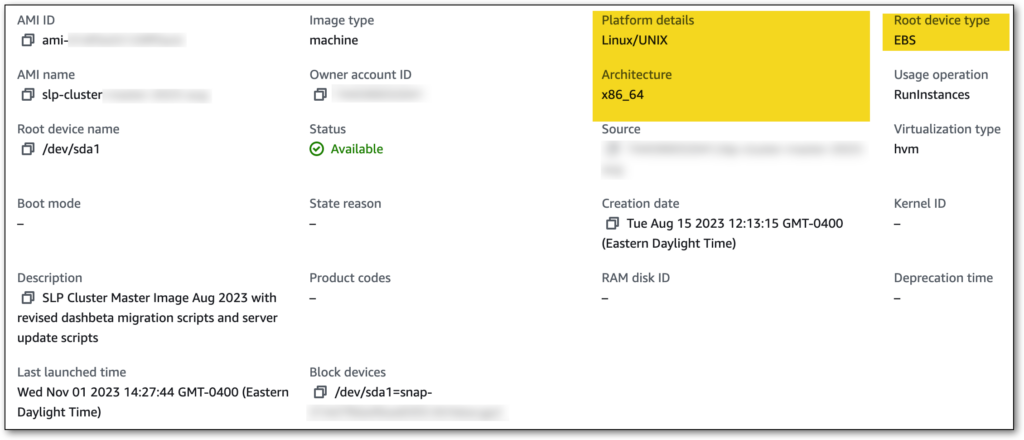

Key details of our current EC2 image: Linux/UNIX on x86_64 architecture with EBS volumes.

Software stack includes: WordPress 6.X on PHP 7 (soon to be 8) through nginx

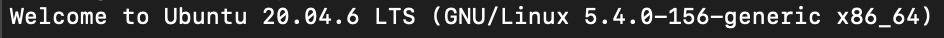

Our OS is Ubuntu 20.04

Setup

Here are the AWS docs on how to build an image on Amazon Linux as the Docker starting image.

- Setting up with Amazon ECR

- Install Docker

- Install AWS CLI

Docker Build

Create a Dockerfile to define the image. Here we want to build on Amazon Linux 2 with nginx.
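As a rough starting point, the sketch below renders the text of a minimal Dockerfile for that base image from Python, so the same script could later feed a build step. It is a sketch under assumptions, not our final image: the `amazonlinux:2` tag, the use of the `nginx1` extras topic to install nginx, and the function name `render_dockerfile` are all illustrative choices rather than anything confirmed in these notes.

```python
def render_dockerfile(base_image: str = "amazonlinux:2") -> str:
    """Return the text of a minimal nginx Dockerfile (illustrative only)."""
    lines = [
        f"FROM {base_image}",
        # Assumption: on Amazon Linux 2, nginx comes from the "nginx1" extras topic.
        "RUN amazon-linux-extras install -y nginx1 && yum clean all",
        "EXPOSE 80",
        # Run nginx in the foreground so the container keeps running.
        'CMD ["nginx", "-g", "daemon off;"]',
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Print the Dockerfile; redirect to a file named Dockerfile to use it.
    print(render_dockerfile(), end="")
```

Writing the output to a file named Dockerfile and running a normal Docker build against it should give a bare nginx container to iterate on before PHP and WordPress are layered in.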

Persistent Objects

The current setup uses Elastic File System (EFS) to share the object store among code instances.

ECS supports EFS volumes, Docker volumes, and bind mounts. EFS volumes connect to EFS storage.

We use EFS on the current EC2 setup to share the code AND FILESET on the /var/www directory — this should be changed to only share the uploaded files and main media content.

This AWS article describes requirements for EFS on custom installs.

We will use a revision of the EFS architecture so that only our WordPress uploads directory is in the shared space. Our code stack (SLP code on top of WordPress) and system software stack (PHP 8, nginx, Ubuntu) will be part of our container image so there is no longer a need to share code stack on an EFS volume per the current configuration.

- Create an EFS volume via the EFS Interface on AWS

- Assign to the same VPC the ECS based EC2 instances will be in

- Make sure the security group assigned allows the EC2 instances read/write access to the EFS endpoints

The System Architecture

EC2 – Elastic Compute Cloud

The Docker containers run on an EC2 instance that has a local version of Docker installed. It looks like you need to create an EC2 instance with Docker on it and put it in a VPC. We will use the VPC we already have set up, with a new baseline image.

You can build and test the containers locally on Docker Desktop with Docker Compose and deploy on Amazon ECS using Fargate.

SG – EC2 Security Group

The security group keeps the instances “on lockdown”. It is the network routing and simple IP firewall for the service associated with it. For the EC2 instances in this project you do not require inbound ports to be open, but it is advised to create an SSH rule so you can SSH in to the box and look at what is going on. We will host web servers on there so we will open port 443 (HTTPS) for that.

We will use a new SG: MySLP-ECS-Services (sg-0a…c9)

Inbound rules: HTTP from anywhere, SSH from my local IP
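The inbound rules can be written down as data before touching the console. The sketch below builds them in the `IpPermissions` shape that the EC2 security group ingress API accepts. The admin address `203.0.113.10/32` is a documentation-range placeholder, not our real IP, and the helper names are made up for illustration. Port 80 follows the rule listed above; swap in 443 if HTTPS is terminated on the instance.

```python
import ipaddress

ADMIN_CIDR = "203.0.113.10/32"  # hypothetical placeholder for "my local IP"


def ingress_rule(port: int, cidr: str, description: str) -> dict:
    """Build one TCP ingress rule in the EC2 IpPermissions shape."""
    ipaddress.ip_network(cidr)  # raises ValueError on a malformed CIDR
    return {
        "IpProtocol": "tcp",
        "FromPort": port,
        "ToPort": port,
        "IpRanges": [{"CidrIp": cidr, "Description": description}],
    }


def build_ingress(admin_cidr: str = ADMIN_CIDR) -> list:
    """HTTP open to the world, SSH limited to a single admin address."""
    return [
        ingress_rule(80, "0.0.0.0/0", "HTTP from anywhere"),
        ingress_rule(22, admin_cidr, "SSH from admin workstation"),
    ]


if __name__ == "__main__":
    for rule in build_ingress():
        print(rule)
```

Keeping SSH pinned to a /32 means the box stays reachable for debugging without exposing port 22 to the internet.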

VPC – Virtual Private Cloud

You will need a VPC to “gather up” all the related ECS resources for this project.

Rather than re-invent the wheel we will use our existing VPC: slp-cluster-vpc (vpc-1b…7c)

Tenancy: Default

IPv4 CIDR: 172.30.0.0/16

IPv6: none
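For a feel of how much room that address block gives the cluster, here is a small sketch using Python's standard `ipaddress` module. The /20 subnet size is an assumption picked for illustration; the notes do not record how the VPC is actually subnetted.

```python
import ipaddress

# The VPC range from the notes above, written in full CIDR form.
vpc = ipaddress.ip_network("172.30.0.0/16")

# Assumption: carve the VPC into /20 subnets (for example one per availability zone).
subnets = list(vpc.subnets(new_prefix=20))


def in_vpc(cidr: str) -> bool:
    """True when the given subnet lies entirely inside the VPC range."""
    return ipaddress.ip_network(cidr).subnet_of(vpc)


if __name__ == "__main__":
    print("addresses in VPC:", vpc.num_addresses)
    print("number of /20 subnets:", len(subnets))
    print("first two subnets:", subnets[0], subnets[1])
    print("172.31.0.0/20 inside VPC?", in_vpc("172.31.0.0/20"))
```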

Task Definitions

Tasks (task definitions) define the container image, size of container (CPU, memory), and volume attachments. Looks like it can have scripts, etc. to determine what runs as well as things like when it is “done”.

A task definition is the application blueprint, stored as a JSON file.

Specify:

- Docker Image (custom, see above)

- CPU and Memory for each task or each container

  - 2 vCPU

  - 8 GiB

- Launch Type (EC2)

- Docker Network Mode

  - awsvpc – own ENI (elastic network interface) and private IPv4 (matches EC2 instances)

  - bridge – Docker’s virtual network on Linux, a local virtual LAN on the instance with a bridge to the outside

  - host – bypass the Docker network, use the host network

  - none – no external network

- Logging Configuration – default

- Volumes – we will attach the EFS we created earlier for file uploads

  - Add a volume and give it a name: efs-uploads. Leave the root directory as /

  - Add a mount point, mounting the efs-uploads volume to /var/www/html/wp-content/uploads
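Pulling the list above together, the sketch below builds a task definition as a Python dictionary and dumps it to JSON. The field names follow the ECS task definition format. The family (`slp-saas-test`) and container name (`slp-saas-fe-be`) are the ones used elsewhere in this notebook; the image URI and EFS file system ID are placeholders, and picking `awsvpc` as the network mode is an assumption, since the notes only list the options.

```python
import json


def build_task_definition(image: str, efs_file_system_id: str) -> dict:
    """Return an ECS task definition mirroring the settings listed above."""
    return {
        "family": "slp-saas-test",
        "requiresCompatibilities": ["EC2"],
        "networkMode": "awsvpc",  # assumption: one of the four modes listed
        "cpu": "2048",            # 2 vCPU (ECS counts 1024 CPU units per vCPU)
        "memory": "8192",         # 8 GiB expressed in MiB
        "containerDefinitions": [
            {
                "name": "slp-saas-fe-be",
                "image": image,
                "essential": True,
                "portMappings": [{"containerPort": 80, "protocol": "tcp"}],
                "mountPoints": [
                    {
                        "sourceVolume": "efs-uploads",
                        "containerPath": "/var/www/html/wp-content/uploads",
                        "readOnly": False,
                    }
                ],
            }
        ],
        "volumes": [
            {
                "name": "efs-uploads",
                "efsVolumeConfiguration": {
                    "fileSystemId": efs_file_system_id,
                    "rootDirectory": "/",
                },
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder values; substitute the real ECR image URI and EFS ID.
    task_def = build_task_definition("example.invalid/slp-saas:latest", "fs-EXAMPLE")
    print(json.dumps(task_def, indent=2))
```

The printed JSON is the kind of document that can be pasted into the task definition JSON editor or registered from a script, which keeps the blueprint in version control rather than in console clicks.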

Clusters

Define the cloud space where tasks or services run.

These are actually created through CloudFormation behind-the-scenes on the ECS Cluster service.

Clusters provide a cloud space to run the containers. A cluster defines the EC2 instance(s), networking, load balancing, and scaling, as well as what tasks to run. Tasks run in these clusters.

Services

Services are the running instances of the task definitions (that run on a cluster). A service can then be a service type (long running like a web server maybe?) or a task (short process that ends — or so it seems).

For the service:

- Environment

  - Choose the service (slp-saas-test)

  - Select a compute configuration – capacity provider or launch type (capacity provider)

  - Capacity provider strategy (cluster default)

- Deployment Config

  - Application type (service)

  - Task definition (slp-saas-test) revision is auto (latest)

  - Service name (slp-saas-test-service)

  - Service type (Replica)

  - Deployment type (Rolling updates)

  - Use ECS circuit breaker

- Networking

  - VPC (existing slp-cluster-vpc)

  - Use existing security group (gwp-vpc-default)

- Load Balancing

  - Type: ALB

  - New ALB: slp-saas-test-lb

  - Container: slp-saas-fe-be 80:80 (might need to change that)

  - New Listener: port 443 HTTPS, use ACM cert (*.storelocatorplus.com)

  - New Target Group: slp-saas-test-tg, HTTPS protocol, HTTPS health check, /VERSION.txt check point

- Auto Scaling

  - Tasks: min 1, max 5

  - Target Tracking

    - Policy: slp-saas-test-scaling-policy, ECSServiceAverageCPUUtilization 70%, 300s cooldown

- Task Placement

  - AZ balanced spread
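The auto scaling choices above can also be captured as data. The sketch below builds the two request bodies that Application Auto Scaling expects for an ECS service: a scalable target (min and max task count) and a target tracking policy. The policy name, metric, target value, and limits come from the list above. The cluster name `slp-saas-test-cluster` is hypothetical, and applying the 300 second cooldown to both scale-out and scale-in is an assumption, since the notes record a single value.

```python
def build_scaling_config(cluster: str, service: str):
    """Return (scalable_target, scaling_policy) request bodies for an ECS service."""
    common = {
        "ServiceNamespace": "ecs",
        "ResourceId": f"service/{cluster}/{service}",
        "ScalableDimension": "ecs:service:DesiredCount",
    }
    # Task count may float between 1 and 5.
    scalable_target = {**common, "MinCapacity": 1, "MaxCapacity": 5}
    scaling_policy = {
        **common,
        "PolicyName": "slp-saas-test-scaling-policy",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": 70.0,  # aim for 70% average CPU across the service
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ECSServiceAverageCPUUtilization"
            },
            "ScaleOutCooldown": 300,  # assumption: same cooldown both ways
            "ScaleInCooldown": 300,
        },
    }
    return scalable_target, scaling_policy


if __name__ == "__main__":
    # "slp-saas-test-cluster" is a hypothetical cluster name.
    target, policy = build_scaling_config("slp-saas-test-cluster", "slp-saas-test-service")
    print(target)
    print(policy)
```

With an AWS SDK such as boto3 installed, these dictionaries would most likely be passed as keyword arguments to the Application Auto Scaling client's `register_scalable_target` and `put_scaling_policy` calls; that wiring is left out here so the sketch runs without credentials.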

Notebook Notes

In the AWS container world via ECS, services (a web server?) are long-running apps. The app a service runs is defined by the task definition, which essentially describes a Docker container: a self-contained environment, much like a lightweight virtual machine, holding the OS userland, the OS services stack (PHP, nginx), and the app stack (WP, SLP). These services then run in a cluster, which is essentially a definition of the AWS cloud environment (EC2 instances, scaling, balancing) for the “hardware” that will host the containers.

Clusters <- Service <- Task definitions <- Docker containers <- Docker images

When you create a Service in the ECS Cluster, AWS fires up CloudFormation. Depending on the options you selected, it will create various EC2 services for your app. In this learning exercise it is creating an EC2 instance to host the containers along with a new Auto Scaling Group, Load Balancer, and Target Group.

Initial Attempt On ECS Container Deployment

After doing some homework and making a few small changes it is time to test the container-based WordPress SaaS app.

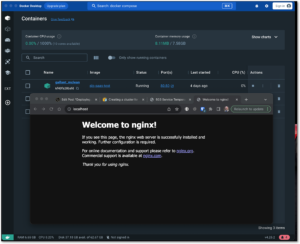

The initial image is built with Ubuntu 20 and nginx with a basic starting home page. It comes up locally via Docker Desktop, so the image and base web server are running.

However, connecting to the test hostname on something.storelocatorplus.com comes up with a 503 error.

The application load balancer is up and the ports look fine.

The ECS cluster is running an EC2 instance and the instance can be accessed with an SSH login.

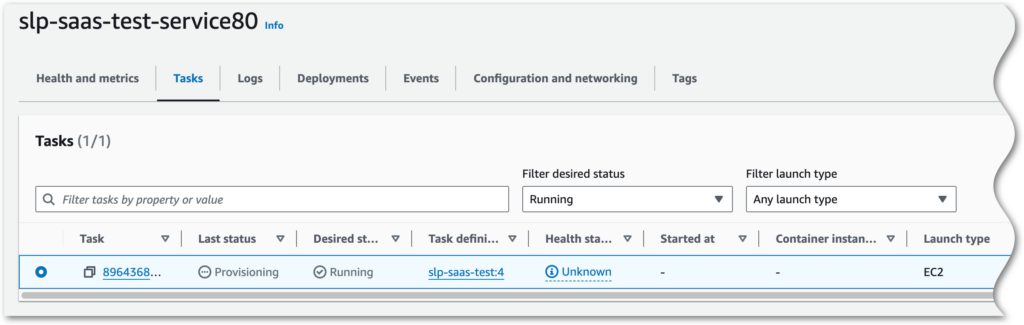

The service on the cluster is also active and in replica state; BUT we do have an issue here…

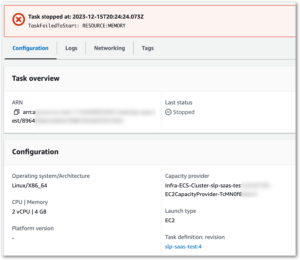

Inside the service it shows 0/1 tasks running.

The task says it is provisioning — something is going on in the task definition.

I’m not sure, but there may be a configuration issue related to the memory allocated to the container vs. what is available from the c6i.large (2 vCPU / 4 GiB) EC2 instance. I thought this was set to use 3.5 GiB on the container; time to check the params.

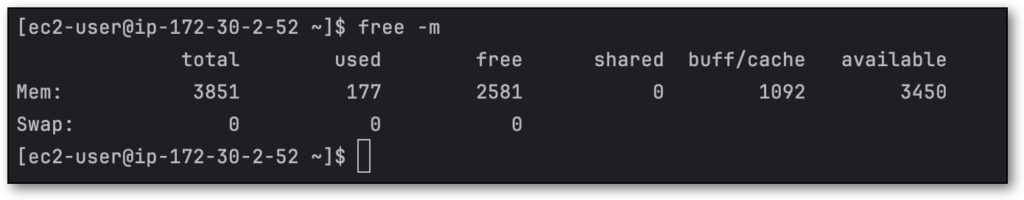

Also – if that is the case, we do not have 3.5GiB available on the EC2 instance. Logging in with ec2-user to the instance shows there is only 2.5GiB available after the OS (Amazon Linux 2) + Docker services are up.

Let’s tune the task requirements down to 2 vCPU and 2.5 GiB, and the container to 2 vCPU, 1 vGPU, 2 GiB hard and soft limits.
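The memory arithmetic behind that change is simple enough to check in a few lines. The sketch below converts the figures from these notes to MiB (the unit ECS task definitions use) and tests whether a task reservation fits in what the instance has left. The helper names are illustrative, and the simple "reservation must not exceed free memory" rule is a simplification of how ECS places tasks.

```python
MIB_PER_GIB = 1024


def gib_to_mib(gib: float) -> int:
    """Convert GiB to whole MiB, the unit used in ECS task definitions."""
    return int(gib * MIB_PER_GIB)


def task_fits(available_mib: int, task_mib: int) -> bool:
    """A task can only be placed if its memory reservation fits on the instance."""
    return task_mib <= available_mib


# Figures from the notes: about 2.5 GiB free on the c6i.large after OS + Docker.
AVAILABLE_MIB = gib_to_mib(2.5)

if __name__ == "__main__":
    for label, requested_gib in [("first attempt", 3.5), ("tuned task", 2.5)]:
        requested = gib_to_mib(requested_gib)
        verdict = "fits" if task_fits(AVAILABLE_MIB, requested) else "does not fit"
        print(f"{label}: {requested} MiB requested, {AVAILABLE_MIB} MiB free: {verdict}")
    # The container limit (2 GiB) must also stay within the task size (2.5 GiB).
    print("container within task:", task_fits(gib_to_mib(2.5), gib_to_mib(2)))
```

A task that asks for more memory than any instance in the cluster can offer never leaves the provisioning state, which matches the 0/1 running tasks seen above.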

Second Attempt : Scaling and Volume Timeout

No more memory errors, but we have a scaling and a time out issue.

Let’s simplify and drop the overly optimistic attempt to attach an EFS volume and auto-scale. Time to set up a service with no scaling and a task definition with no EFS mounts.

Start with task definition and remove the volumes (EFS mounts).

Now go to clusters and create a new service.

- Capacity provider strategy

- Service type app

- Family: slp-saas-test , latest

- Name: slp-saas-test-service

- Replica, 1 desired task, rolling update (all defaults)

- VPC : default

- Security Group: MySLP-ECS-Services

- Add ALB: slp-saas-test-elb (new), new listener, new target group

Time to see what this container / cluster logging does.

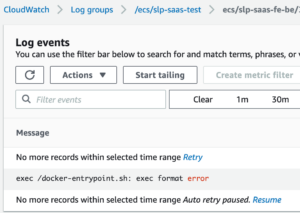

Building on the Mac M1 is the issue. From Stack Overflow…

The exec format error message means that you have built your docker image on an ARM system like a Mac M1, and are trying to run it on an X86 system, or the opposite, building it on an X86 computer and trying to run it on an ARM system like an AWS Graviton environment. You either need to use Docker BuildKit to build the image for the environment you intend to run it, or make sure that your build environment and deployment environment have the same CPU architecture.

Mark B on SO
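The first option in that answer, building for the target platform, can be sketched as a small helper that maps machine names to Docker platform names and assembles a `docker buildx build --platform` command. The image tag `slp-saas:test` and the function names are hypothetical. On a real machine, Python's `platform.machine()` would supply the build host's name (for example `arm64` on an M1 Mac).

```python
# Common machine names mapped to the architecture names Docker uses.
_ARCH_ALIASES = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "arm64": "arm64",
    "aarch64": "arm64",
}


def docker_arch(machine: str) -> str:
    """Normalize a machine name (e.g. from platform.machine()) to Docker's naming."""
    try:
        return _ARCH_ALIASES[machine.lower()]
    except KeyError:
        raise ValueError(f"unrecognized architecture: {machine!r}") from None


def needs_cross_build(build_machine: str, target_machine: str) -> bool:
    """True when the build host and the deployment host differ in CPU architecture."""
    return docker_arch(build_machine) != docker_arch(target_machine)


def buildx_command(target_machine: str, tag: str) -> list:
    """Assemble a buildx command that targets the deployment architecture."""
    return [
        "docker", "buildx", "build",
        "--platform", f"linux/{docker_arch(target_machine)}",
        "-t", tag,
        ".",
    ]


if __name__ == "__main__":
    # Example: building on an M1 Mac (arm64) for an x86_64 EC2 instance.
    if needs_cross_build("arm64", "x86_64"):
        print(" ".join(buildx_command("x86_64", "slp-saas:test")))
```

The other route, matching the deployment hardware to the build machine, avoids emulated builds entirely at the cost of committing the cluster to one CPU family.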

Let’s try building a Graviton-based (ARM64) cluster using c6g.large instances and launch the service and image (via task definition) there.

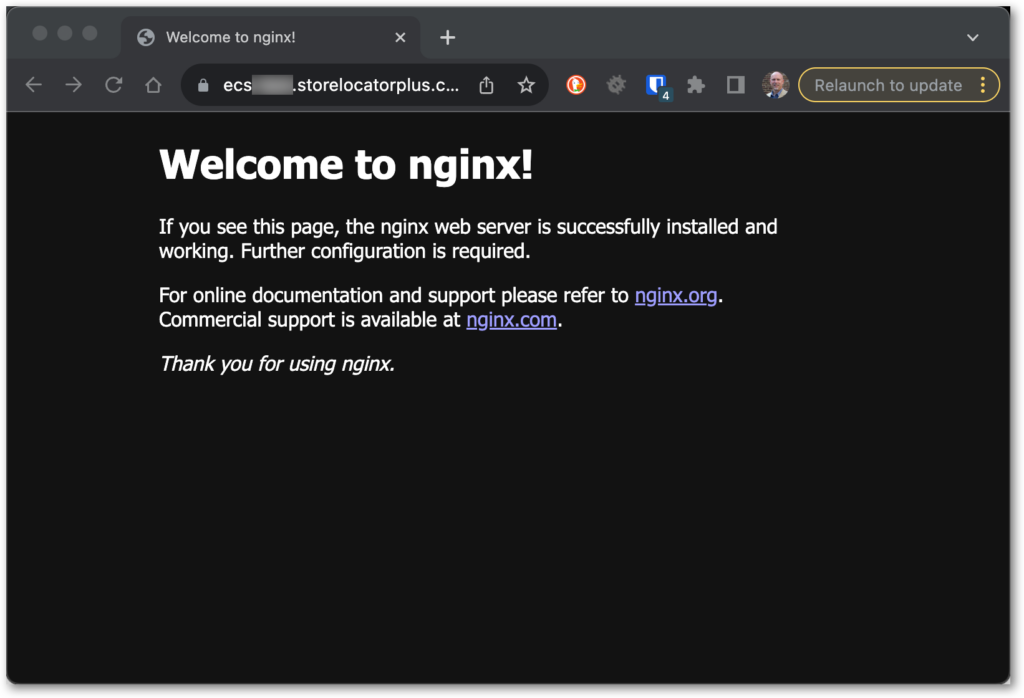

Successful Attempt

The current infrastructure is a container running an image with Ubuntu 22.04.1 with nginx. This is served on an EC2 c6g.xlarge instance (4 vCPU, 8GiB, EBS Store, 10 Gbps bandwidth, 4,750 Mbps EBS bandwidth). It is attached to an application load balancer that is served by an alias mapping from our test hostname (ecs*.storelocatorplus.com) to the ALB. An auto scaling group helps keep capacity where desired.

Here is the general structure from the ECS services standpoint: a cluster running a “web app” service, which runs the task definition as a service (vs. a one-and-done task), which in turn is based on a Docker image with Ubuntu and nginx. Building the image for the linux/arm64 platform and running it on an Amazon Linux 2023 arm64 host (a Graviton EC2 instance) helps things along.

Now that we have a container image serving up web pages, it is time to build on this image to serve the WordPress SaaS application via the container and let the ECS services manage our cluster. This will help automate our processes with CI/CD methodologies on both the codebase and the IT infrastructure moving forward.

Image by Wolfgang Weiser from Pixabay